AI, Privacy, and Security in the News

Technology rarely stands still, but some weeks highlight just how quickly things are changing. In the past few days alone, major developments have emerged across artificial intelligence, cybersecurity, and digital privacy.

These stories involve some of the largest companies in the tech industry, government agencies, and independent security researchers. While they may appear distant from everyday users, the underlying issues affect how software is secured, how AI systems behave, and how personal data is protected.

Apple Fixes an Actively Exploited macOS Vulnerability

Apple recently released security updates addressing a serious vulnerability affecting macOS and several other Apple operating systems. According to researchers, the flaw could allow attackers to execute malicious code on a device. Apple also confirmed the vulnerability may have already been exploited in real-world attacks.

In cybersecurity, this type of flaw is known as a zero-day vulnerability. The term refers to a security weakness that attackers discover or exploit before the vendor has released a fix, leaving defenders zero days to prepare. Until a patch ships and users install it, defensive tools often offer little protection.

Researchers reported that the issue involved dyld, Apple's dynamic linker, which loads the shared libraries an application depends on when it launches. If successfully exploited, the flaw could let attackers run unauthorized code on a Mac or other Apple device.

Apple addressed the issue through its latest security updates. For users, the takeaway is simple but important: installing macOS updates promptly remains one of the most effective ways to stay protected.
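As a rough illustration of what "staying current" means in practice, the sketch below compares the installed macOS version against a minimum patched version. The `MIN_PATCHED` value is a placeholder assumption, not the actual fixed release; the real number should come from Apple's security release notes. The helper names `parse_version` and `is_patched` are hypothetical.

```python
import platform

# Placeholder only: substitute the version listed in Apple's security notes.
MIN_PATCHED = "14.0.0"


def parse_version(text):
    """Turn a string such as '14.6' into (14, 6, 0); missing parts count as zero."""
    parts = [int(p) for p in text.split(".") if p]
    return tuple(parts + [0] * (3 - len(parts)))


def is_patched(installed, minimum):
    """Return True when the installed version is at least the minimum version."""
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    installed = platform.mac_ver()[0]  # empty string when not running on macOS
    if not installed:
        print("Not running on macOS; nothing to check.")
    elif is_patched(installed, MIN_PATCHED):
        print(f"macOS {installed} meets the minimum version {MIN_PATCHED}.")
    else:
        print(f"macOS {installed} is older than {MIN_PATCHED}; install updates.")
```

Comparing versions as integer tuples rather than strings avoids ordering mistakes such as treating "14.10" as older than "14.9".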

Why macOS Updates Matter More Than Ever

macOS has long maintained a reputation for strong built-in security. Apple designed the operating system with several layers of protection intended to reduce the risk of malware and unauthorized access.

These protections include:

- Gatekeeper

- System Integrity Protection

- Sandboxing

- XProtect malware detection

- Transparency, Consent, and Control (TCC) privacy protections

That reputation does not make Macs immune. As Macs become more widely used, they attract greater attention from attackers, and security researchers are increasingly discovering sophisticated malware designed specifically to bypass Apple’s built-in protections.

Because of this shift, timely updates have become even more critical. Apple frequently patches vulnerabilities discovered by researchers or identified during internal security testing.

Researchers Find New Ways to Bypass Apple Privacy Controls

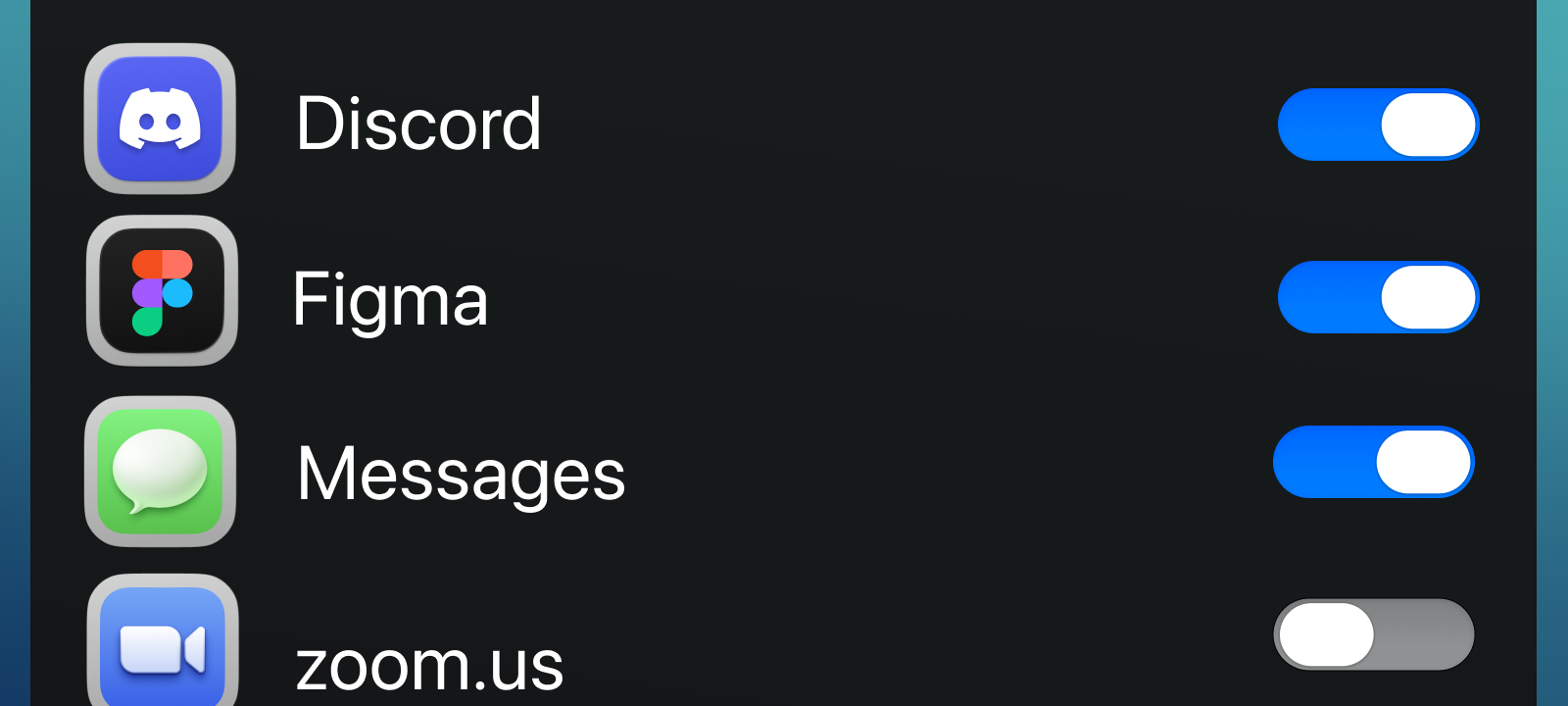

Another recent discovery focuses on Apple’s Transparency, Consent, and Control system, commonly known as TCC.

TCC manages privacy permissions on macOS. When an application attempts to access sensitive resources—such as the microphone, camera, or documents folder—the system presents a permission prompt to the user. Access is granted only after explicit approval.

Researchers studying macOS security recently identified scenarios in which these protections might be bypassed. While these vulnerabilities are often complex and difficult to exploit, studying them allows companies like Apple to strengthen their defenses over time.

OpenClaw and the Rise of Autonomous AI Agents

One of the most talked-about developments in artificial intelligence involves OpenClaw, an open-source AI agent system that has grown rapidly in popularity.

Unlike traditional chatbots, AI agents are designed to perform actions rather than simply generate responses. Instead of asking an AI to write text, users can instruct an agent to complete tasks such as:

- Browsing websites

- Sending emails

- Running terminal commands

- Modifying files

- Interacting with applications

These systems combine language models with tools that allow them to interact directly with computers and online services. While the concept is powerful, it also introduces new security risks.

Security Researchers Raise Alarms About OpenClaw

Security researchers examining OpenClaw deployments have raised several concerns. Some installations have reportedly been exposed on the public internet, allowing attackers potential access to the AI agent’s control interface.
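Whether a control interface is reachable from outside the machine usually comes down to the address it binds to. The sketch below is a generic check, not OpenClaw's actual configuration logic: the function name `listens_beyond_localhost` is hypothetical, and it only classifies a bind address as loopback-only or not.

```python
import ipaddress


def listens_beyond_localhost(host):
    """Return True if binding to `host` could expose a service to other machines.

    Loopback addresses (127.0.0.0/8, ::1) and the name 'localhost' are treated
    as local-only. Anything else, including 0.0.0.0 and ::, is treated as
    potentially exposed.
    """
    if host.strip().lower() == "localhost":
        return False
    try:
        addr = ipaddress.ip_address(host.strip())
    except ValueError:
        # Unknown hostnames are treated conservatively as exposed.
        return True
    return not addr.is_loopback


if __name__ == "__main__":
    for candidate in ["127.0.0.1", "::1", "0.0.0.0", "192.168.1.10"]:
        status = "exposed" if listens_beyond_localhost(candidate) else "local only"
        print(f"{candidate}: {status}")
```

A bind address that passes this check can still be exposed through a reverse proxy or port forwarding, so the check is a first filter rather than proof of safety.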

Because agents often have broad permissions—including access to files, system commands, and APIs—an attacker who gains control of the agent could effectively control the system itself.
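One common way to narrow those permissions is to let an agent run only commands from an explicit allowlist. The sketch below assumes a hypothetical allowlist and a hypothetical `is_command_allowed` helper; it is not taken from OpenClaw or any specific agent framework, and it is far from a complete sandbox.

```python
import shlex

# Hypothetical allowlist: only these programs may be launched by the agent.
ALLOWED_COMMANDS = {"ls", "cat", "grep"}

# Characters that let a shell chain, redirect, or substitute commands.
SHELL_METACHARACTERS = set(";|&`$><\n")


def is_command_allowed(command_line, allowed=ALLOWED_COMMANDS):
    """Return True only if the command is a single allowlisted program call."""
    if any(ch in SHELL_METACHARACTERS for ch in command_line):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        # Unbalanced quotes and similar parse errors are rejected outright.
        return False
    if not tokens:
        return False
    return tokens[0] in allowed


if __name__ == "__main__":
    for cmd in ["ls -la", "rm -rf /", "ls; rm -rf /"]:
        verdict = "allow" if is_command_allowed(cmd) else "deny"
        print(f"{cmd!r}: {verdict}")
```

Denying by default means a command the author never anticipated is rejected rather than executed, which is the safer failure mode for a tool that can touch files and system commands.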

Researchers have also identified vulnerabilities within parts of the OpenClaw ecosystem, including issues that could allow remote code execution or data exposure. Additional studies examining AI agent “skills” have found that some extensions contain vulnerabilities or suspicious behavior.

Tech Companies Begin Restricting OpenClaw

Because of these risks, some companies have begun restricting the use of OpenClaw and similar agentic AI tools inside corporate environments.

Security teams worry that powerful AI agents may be deployed too quickly without sufficient safeguards. As a result, several organizations have limited or banned experimental AI agents from internal networks until stronger security practices are developed.

The Anthropic and Pentagon Dispute

Another major story in the AI world involves Anthropic, the company behind the Claude AI system.

Anthropic had previously collaborated with the U.S. Department of Defense on artificial intelligence projects. However, reports indicate the Pentagon sought broader permissions for certain military applications of the technology.

Anthropic reportedly refused to allow its models to be used for activities such as autonomous weapons or mass surveillance. The disagreement escalated when the Pentagon designated the company as a supply-chain security risk, preventing government contractors from working with it.

Anthropic has filed lawsuits challenging the decision, arguing that the designation is unfair and damaging to the company.

OpenAI Steps Into the Pentagon Contract

Following the dispute, OpenAI reportedly secured a Pentagon contract to provide AI technology for military systems.

The move sparked debate across the AI industry. Some critics argue that AI companies should strictly control how their models are used, especially in military contexts. Others believe cooperation with government agencies is necessary for national security.

The situation highlights how rapidly artificial intelligence is becoming intertwined with national security, surveillance capabilities, and geopolitical strategy.

Why These Stories Matter for Everyday Users

Government contracts and security research may seem far removed from daily life, but these stories reveal trends that shape the technology people use every day.

Artificial intelligence is being built into more of the software people depend on, and the security challenges around it are growing just as quickly. Governments are also taking a larger role in how AI technologies are developed and regulated.

Understanding these changes helps consumers make more informed choices about the technology they rely on.

The Bigger Picture

All of these developments point to a broader shift. Artificial intelligence, cybersecurity, and privacy are becoming tightly interconnected.

Operating systems must adapt to new threats. AI systems must be secured against misuse. Governments must determine how powerful technologies should be regulated.

For individuals, the core security practices remain the same:

- Keep software updated

- Use trusted applications

- Be cautious with experimental tools

- Protect personal data

Security is not a single feature—it is an ongoing process. The events unfolding today will shape how the digital world evolves in the years ahead.